One of the benefits of using a tag management system like DTM is the ability to lighten the load on your page by moving tracking pixels into DTM. That said, simply moving code into DTM may not improve page performance: there are best practices you need to follow to get the most out of what DTM can offer.

1. Decide on the scope

When the DTM library loads, it defers as much code as possible to later in the page. To map out what should run where, it must run through each of your rule conditions and see which are currently met. That means that additional rules and additional conditions will actually slow down the synchronous part of your DTM library. When possible, don’t create a new rule for each new tag; instead, have rules be specific to their condition. I have a partner post about how to improve page performance when planning out your rules, but for now, try to start thinking of your rules in terms of the user action: have one rule for when the user sees a product details page, for example, rather than a series of Product Details Page rules, each with a different tag.

2. Decide which type of DTM script to use

Since third-party tag vendors generally deliver their code in HTML form, intended to be pasted directly into your page, there are usually a few changes you need to make before DTM can fire the code non-sequentially. What you do varies by the tag.

Before proceeding, you need to decide: should you use non-sequential HTML or non-sequential JavaScript? DTM loads non-sequential HTML by placing it in a separate <iframe> so it can load the content without blocking anything else. This can work well, but has some downsides: that iframe can’t get all the same information the parent page can. This includes many data elements: for security reasons, it can’t reference a data element that pulls from Custom JS or JS objects. If you’re referencing a data element in non-sequential HTML, you need to use %dataElementName% rather than _satellite.getVar("dataElementName"). This isn’t the most supported usage, so definitely test it thoroughly.
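
To make the percent-sign notation a little more concrete, here is a toy model of token substitution. It is only an illustration of the idea, not DTM's actual implementation: `fillTokens`, the `values` lookup, and the example.com URL are all hypothetical.

```javascript
// Toy model only: replaces %name% tokens in a snippet with looked-up values.
// fillTokens is a hypothetical helper, not part of DTM.
function fillTokens(snippet, values) {
  return snippet.replace(/%([^%]+)%/g, function (match, name) {
    // Leave unknown tokens untouched so a missing data element is easy to spot.
    return Object.prototype.hasOwnProperty.call(values, name)
      ? String(values[name])
      : match;
  });
}

var html = '<img src="https://example.com/pixel?order=%purchase: order id%" height="1" width="1">';
var filled = fillTokens(html, { 'purchase: order id': 'A-1001' });
// `filled` now carries the order id in the src URL in place of the token.
```

In non-sequential JavaScript you would read the same value at run time with _satellite.getVar('purchase: order id') instead.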

3. Remove unneeded pieces

Next, remove any <noscript> tags: they won’t do any good in a JavaScript-based framework like DTM. These days, these tags aren’t really needed and may actually inflate your data, because most of the visitors who don’t have JavaScript enabled are bots.

4. Convert the code to suit your script type

Next, convert the code to the appropriate format (see below) and add it in a rule as a Third Party Tag.

Purely Script

Some tags are already just JavaScript. For instance, take this code from Facebook:

<!-- Facebook Pixel Code -->

<script>

!function(f,b,e,v,n,t,s){if(f.fbq)return;n=f.fbq=function(){n.callMethod?n.callMethod.apply(n,arguments):n.queue.push(arguments)};if(!f._fbq)f._fbq=n;

n.push=n;n.loaded=!0;n.version='2.0';n.queue=[];t=b.createElement(e);t.async=!0;

t.src=v;s=b.getElementsByTagName(e)[0];s.parentNode.insertBefore(t,s)}(window,

document,'script','//connect.facebook.net/en_US/fbevents.js');

fbq('init', '123456789123');

fbq('track', 'PageView');

</script>

<noscript>

<img height="1" width="1" style="display:none"

src="https://www.facebook.com/tr?id=1552630471664132&ev=PageView&noscript=1"

/></noscript>

<!-- End Facebook Pixel Code -->

I would remove the <script> tags, the <noscript> portion, and HTML comments, and paste it directly as JavaScript:

!function(f,b,e,v,n,t,s){if(f.fbq)return;n=f.fbq=function(){n.callMethod?

n.callMethod.apply(n,arguments):n.queue.push(arguments)};if(!f._fbq)f._fbq=n;

n.push=n;n.loaded=!0;n.version='2.0';n.queue=[];t=b.createElement(e);t.async=!0;

t.src=v;s=b.getElementsByTagName(e)[0];s.parentNode.insertBefore(t,s)}(window,

document,'script','//connect.facebook.net/en_US/fbevents.js');

fbq('init', '123456789123');

fbq('track', 'PageView');

I know Facebook and Chango are two tag types that fall in this category.
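
If you do this cleanup often, the manual steps can be roughly sketched as a helper. This is a minimal sketch under my own assumptions: `toPlainScript` is a hypothetical name, it only suits snippets whose <script> tags are inline (no src attribute), and regex-based cleanup is fragile, so review the result by hand.

```javascript
// Rough sketch: strips HTML comments, <noscript> blocks, and <script> wrappers
// from a vendor snippet, leaving only the inline JavaScript body.
// toPlainScript is a hypothetical helper name, not part of DTM.
function toPlainScript(snippet) {
  return snippet
    .replace(/<!--[\s\S]*?-->/g, '')                 // HTML comments
    .replace(/<noscript[\s\S]*?<\/noscript>/gi, '')  // no-JavaScript fallback
    .replace(/<\/?script[^>]*>/gi, '')               // opening and closing script tags
    .trim();
}

// A shortened, made-up snippet in the same shape as the vendor code above.
var vendorSnippet = [
  '<!-- Example Pixel Code -->',
  '<script>',
  "fbq('init', '123456789123');",
  "fbq('track', 'PageView');",
  '</script>',
  '<noscript><img height="1" width="1" src="https://example.com/tr?noscript=1"/></noscript>',
  '<!-- End Example Pixel Code -->'
].join('\n');

var cleaned = toPlainScript(vendorSnippet);
// `cleaned` holds just the two fbq(...) lines.
```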

Simple Pixel

The easiest pixels are those that just require loading a tiny invisible image. For instance, let’s say I got the following Yahoo Dot code:

<img src="https://sp.analytics.yahoo.com/spp.pl?a=123456789&.yp=98765&js=no" height="0" >

If I want to load an image like this asynchronously, I could paste it in, unchanged, as a non-sequential HTML third party tag. As mentioned earlier, this would create an iframe that loads on the side of the page, so as to not slow down page performance.

I could also add it as a non-sequential JavaScript third party tag, using document.body.appendChild to append it to the body, whether the page has finished loading or not. This also lets you add these pixels in event-based rules on SPAs or for post-page-load user actions.

var dcIMG = document.createElement('img');

dcIMG.setAttribute('src', 'https://sp.analytics.yahoo.com/spp.pl?a=123456789&.yp=98765&js=no');

dcIMG.setAttribute('height','1');

dcIMG.setAttribute('width','1');

dcIMG.setAttribute('border','0');

dcIMG.setAttribute('style','display:none');

document.body.appendChild(dcIMG);

To my knowledge, Yahoo Dot, Bing, Vibrant/Intellitxt, Gumgum are examples of simple pixel code vendors.
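
Since every simple pixel follows the same pattern, it could be wrapped in a small helper. This is a sketch, not anything DTM provides: `firePixel` is a hypothetical name, and the `doc` parameter exists only so the function can be exercised outside a browser.

```javascript
// Sketch of a reusable pixel helper. firePixel is a hypothetical name;
// `doc` defaults to the page's document when it is not passed in.
function firePixel(src, doc) {
  doc = doc || document;
  var img = doc.createElement('img');
  img.setAttribute('height', '1');
  img.setAttribute('width', '1');
  img.setAttribute('style', 'display:none');
  // Set src last so the other attributes are already in place when the request starts.
  img.setAttribute('src', src);
  doc.body.appendChild(img);
  return img;
}
```

In a rule's non-sequential JavaScript, the Yahoo example would then be a single call: firePixel('https://sp.analytics.yahoo.com/spp.pl?a=123456789&.yp=98765&js=no').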

Pixel with query params

Some vendors have simple pixels with query parameters in the src URL that help tell the vendor what they need to know. (Coincidentally, this is the approach used by Adobe Analytics.) Let’s say I got this code from DoubleClick:

<script type="text/javascript">

var axel = Math.random() + "";

var a = axel * 10000000000000;

document.write('<iframe src="//0.fls.doubleclick.net/activityi;src=123456789;type=clientsale;qty=1;cost=[Revenue];ord=[OrderID]?" width="1" height="1" frameborder="0" style="display:none"></iframe>');

</script>

<noscript>

<iframe src="//0.fls.doubleclick.net/activityi;src=123456789;type=clientsale;qty=1;cost=[Revenue];ord=[OrderID]?" width="1" height="1" frameborder="0" style="display:none"></iframe>

</noscript>

<!-- End of DoubleClick Floodlight Tag: Please do not remove -->

I could add this to my site like this, using data elements in place of [Revenue] and [OrderID]:

var axel = Math.random() + "";

var a = axel * 10000000000000;

var dcIMG = document.createElement('iframe');

dcIMG.setAttribute('src', "//0.fls.doubleclick.net/activityi;src=123456789;type=clientsale;qty=1;cost="+ _satellite.getVar('purchase: revenue') +";ord="+ _satellite.getVar('purchase: order id') +"?");

dcIMG.setAttribute('height','1');

dcIMG.setAttribute('width','1');

dcIMG.setAttribute('frameborder','0');

dcIMG.setAttribute('style','display:none');

document.body.appendChild(dcIMG);
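
One way to keep the URL building readable is to pull it into its own function. This is a sketch: `buildFloodlightSrc` is a hypothetical name, and encoding the values is my own assumption, meant to keep a stray ";" or "?" in a value from breaking the URL. Check with the vendor about what they expect.

```javascript
// Sketch: builds the Floodlight iframe URL from plain values.
// buildFloodlightSrc is a hypothetical helper; src/type/qty are the
// placeholder values from the example tag above.
function buildFloodlightSrc(revenue, orderId) {
  return '//0.fls.doubleclick.net/activityi;src=123456789;type=clientsale;qty=1' +
    ';cost=' + encodeURIComponent(revenue) +   // assumption: values are URL-encoded
    ';ord=' + encodeURIComponent(orderId) + '?';
}

var floodlightSrc = buildFloodlightSrc('49.99', 'A-1001');
// → '//0.fls.doubleclick.net/activityi;src=123456789;type=clientsale;qty=1;cost=49.99;ord=A-1001?'
```

In the rule itself, the two arguments would come from _satellite.getVar('purchase: revenue') and _satellite.getVar('purchase: order id').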

Pixels with Script tags

Many vendors require you to add their JavaScript file to your site, as well as set some variables.

For instance, I might get this code from Twitter:

<!-- Twitter single-event website tag code -->

<script src="//platform.twitter.com/oct.js" type="text/javascript"></script>

<script type="text/javascript">twttr.conversion.trackPid('123456', { tw_sale_amount: 0, tw_order_quantity: 0 });</script>

<noscript>

<img height="1" width="1" style="display:none;" alt="" src="https://analytics.twitter.com/i/adsct?txn_id=l5jl4&p_id=Twitter&tw_sale_amount=0&tw_order_quantity=0" />

<img height="1" width="1" style="display:none;" alt="" src="//t.co/i/adsct?txn_id=l5jl4&p_id=Twitter&tw_sale_amount=0&tw_order_quantity=0" />

</noscript>

<!-- End Twitter single-event website tag code -->

So there is a script file that needs to load, then a script that needs to fire afterwards. We can remove the noscript portions, but we still need a way to make sure the .js file has run before we fire twttr.conversion. There aren’t a lot of options for running a .js file after a page has loaded. If you have jQuery, you can use $.ajax; if you don’t, you can use the code below to append the .js file, make sure it has loaded, then run twttr.conversion:

// Build the script element that will load Twitter's library.

var dcJS = document.createElement('script');

var done = false;

dcJS.setAttribute('src', '//platform.twitter.com/oct.js');

dcJS.setAttribute('type','text/javascript');

// Attach the handlers before appending, so the load event can't be missed.

dcJS.onload = dcJS.onreadystatechange = function () {

if(!done && (!this.readyState || this.readyState === "loaded" || this.readyState === "complete")) {

done = true;

callback();

// Handle memory leak in IE

dcJS.onload = dcJS.onreadystatechange = null;

document.body.removeChild(dcJS);

}

};

document.body.appendChild(dcJS);

// Runs once oct.js has loaded and twttr is available.

function callback(){

twttr.conversion.trackPid('123456',{

tw_sale_amount:_satellite.getVar('purchase: total revenue'),

tw_order_quantity:_satellite.getVar('purchase: total units')

});

}

Google AdWords/Remarketing, Twitter, LinkedIn, and Eloqua can all fit this general idea.
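
Because several vendors share this load-then-fire pattern, it could be pulled into a generic loader. This is a sketch under my own assumptions: `loadScript` is a hypothetical name, and the `doc` parameter exists only so the function can be exercised outside a browser.

```javascript
// Sketch of a generic loader: appends a script and calls onReady once it loads.
// loadScript is a hypothetical name; `doc` defaults to the page's document.
function loadScript(src, onReady, doc) {
  doc = doc || document;
  var script = doc.createElement('script');
  var done = false;
  // Attach handlers before appending so the load event can't be missed.
  script.onload = script.onreadystatechange = function () {
    // readyState only exists in old IE; modern browsers just fire onload.
    var state = script.readyState;
    if (done || (state && state !== 'loaded' && state !== 'complete')) { return; }
    done = true;
    script.onload = script.onreadystatechange = null;
    onReady();
  };
  script.setAttribute('src', src);
  doc.body.appendChild(script);
  return script;
}
```

The Twitter example would then call loadScript('//platform.twitter.com/oct.js', ...) with a callback that runs twttr.conversion.trackPid, and the same helper could be reused for other vendors' libraries.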

5. Validate the tag

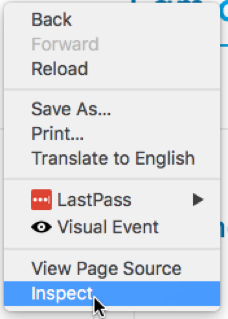

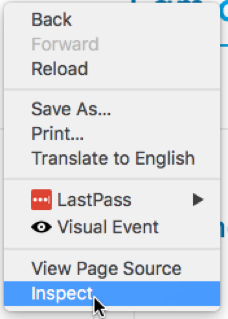

There are many tools for validating that tags are working. Some vendors, like Google, have their own tool, but most tags can be validated either within a developer console or by using vendor-agnostic tools like Ghostery or the Observepoint plugin for Chrome. Most methods require opening a developer console. An easy way to do this in most browsers is to right-click anywhere on the page and select the “Inspect” option. For instance, in Chrome:

First, check there are no errors in the console. Then, to validate a specific tag:

Using the Observepoint plugin: Within the developer console, go to the “Observepoint” tab:

Click the “Recording” button, then refresh the page. Observepoint should show every tracking technology running on the page, including the one you are validating.

Using the built-in tools in your browser: Open the network tab, then refresh the page. Often you can search for the name of the tag. For instance, here I’m searching for “twitter”, and it shows me that data was sent to Twitter:

Other vendors use slightly more disguised names. Check with the vendor if you need more details on how to validate.
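
If you prefer the console to the network tab, the same search can be approximated with the browser's Resource Timing data. This is a sketch: `findRequests` is a hypothetical helper name, and the browser's resource timing buffer is limited, so an empty result doesn't prove the tag didn't fire.

```javascript
// Sketch: filters resource-timing entries by a substring of the request URL.
// findRequests is a hypothetical helper name.
function findRequests(entries, needle) {
  return entries
    .filter(function (entry) { return entry.name.indexOf(needle) !== -1; })
    .map(function (entry) { return entry.name; });
}

// In the browser console, after the page has loaded:
// findRequests(performance.getEntriesByType('resource'), 'twitter');
```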