(This is cross-posted from the 33 Sticks blog)

Almost every organization that uses Digital Analytics has some combination of the following documents:

- Business Requirements Document

- Solution Design Reference/Variable Map

- Technical Specifications

- Validation Specifications/QA Requirements

All of the consulting agencies use them and may even have their own special, unique versions of them. They’re often heavily branded and may be closely guarded. And it can be very difficult to find a template or example as a starting point.

In my 11 years as a consultant who focuses on Digital Analytics implementation, I’ve been through MANY documentation templates. I’ve used the (sometimes vigorously enforced) templates provided by whichever agency employed me at the time; I’ve used clients’ existing docs; I’ve created and recreated my own templates dozens of times. I’ve used Word documents, Google Sheets, txt/js documents, Confluence/Wiki pages, and (much to my chagrin) PowerPoint presentations (there’s nothing wrong with PowerPoint for presentations, but it really isn’t a technical documentation tool). I’ve in turn shared out templates and examples both within agencies and more broadly in the industry. I’ve now decided it’s time to stop hoarding documentation templates and examples and share them publicly in a git repo, starting with the most foundational: the Variable Map (sometimes called a Solution Design Reference, or SDR).

The Variable Map

You may or may not have a tech spec or a business requirements document, but I bet you have a document somewhere that lays out the mapping of your custom dimensions and metrics. Since Adobe Analytics has up to 250 custom eVars, 75 custom props, and up to 1000 custom events, it’s practically essential to have a variable map. In fact, the need for a quick reference is why I created the PocketSDR app a few years ago (shameless plug) so you could use your phone to check what a variable was used for or what its settings are. But when planning/maintaining an implementation, you need a global view of all your variables. This isn’t a new idea: Adobe’s documentation discusses it at a higher level, Chetan Gaikwad covered it on his blog more recently, Jason Call blogged about this back in 2016, Analytics Demystified walked through it in 2015, and even back in 2014, Numeric Analytics discussed the importance of an SDR. Yet if you want a template, example, or starting point, you still generally have to ask colleagues and friends to pass you one under the table, use one from a previous role/org, or you just start from scratch. This is why 33 Sticks is making a generic SDR publicly available on our git repo.

The cat’s out of the bag

There are various reasons folks (including me) haven’t done this in the past. For practitioners, there is (understandably) a hesitance to share what you are doing- you might not want your brand to be associated with how good/not good your documentation is, and/or you may not want to give your competitors any “help” (more on that in an upcoming podcast). For agencies, there may be a desire to not show “the man behind the curtain”, or they may believe (or at least want their clients to believe) that their documentation is special and unique.

So why am I sharing it?

- Because I don’t think the format of my variable map is actually all that special- a variable map is a variable map (but that doesn’t mean we should all have to start from scratch every time). This isn’t intended to be the end-all-be-all of SDRs, but rather a starting point or an example for comparison. Any aspect of it might be boring/obvious to you, overkill, or just not useful, but my hope is that there is at least a part of it that will be helpful.

- Because while I DO think I have some tricks that make my SDRs easier to use, I recognize that most of those tricks are things I only know or do because someone in the industry has shared their knowledge with me, or a client let me experiment a bit to find what worked best.

- Because 33 Sticks doesn’t bill by the hour and I have no incentive to hoard “easy”/low-level tasks from my clients. If a client comes to me and says “I used your blog post to get started on an SDR without you”, I can say, “Great! That leaves us more time to get into more strategic work!”

- Because where I can REALLY offer value as a consultant isn’t in the formatting/data entry part of creating an SDR, but rather, in the thought we put into designing/documenting solutions, and in how we help clients focus on goals and getting long-term value out of data so they don’t get stuck in “maintenance mode.”

- And finally, because I’m hoping to hear back from folks and learn from them about what they’re doing the same or differently. We all benefit from opening up about our techniques.

Enough with the pedantry, let’s get back to discussing how to create a good SDR.

SDR Best Practices

It’s vitally important you keep your variable map in a centrally accessible location. If I update a copy of the SDR on my hard drive, that doesn’t benefit anyone but me, and by the time I merge it back into the “global” one, we may already have conflicts.

It should go without saying, but keeping it updated is also a good idea. I actually view most types of Digital Analytics documentation as fitting into one of two categories: primarily useful at the beginning of an implementation, OR an ongoing, living document. Something like a Business Requirements Document COULD be a living document, but let’s be honest: its primary value is in designing the solution, and it can take a lot of effort to keep it comprehensively up to date. Technical specifications for the data layer are usually a one-time deal: once implemented, they go into an archive somewhere. But the simple variable map… THAT absolutely should be kept current and frequently referenced.

Tools for Creation/Tracking

If you’re already using Adobe Analytics, then you probably need to get an accurate and current list of your variables and their settings. Even if you have an SDR, you should check if it matches what’s set up in your Analytics tool. You could always export your settings from within the Admin Console, but I’ve found the format makes the output very difficult to use. I’d recommend going with one of the many other great industry tools (all of which are free):

- Adobe Consulting has a Health Dashboard that uses the Admin and Reporting APIs to not only pull down your settings, but also show you the top values for each report and highlight recent changes in data.

- ObservePoint Labs has a Google Sheets add-in that can generate an SDR for you (you can even use it to update your admin settings through the Adobe Analytics Admin API).

- Acronym has a handy Report Suite exporter tool.

- My friend/former colleague Gene Jones has a great tool for exporting your Report Suite Settings.

- If you use the Adobe Experience Cloud Debugger Chrome extension and are logged in to the Experience Cloud, it will show you the friendly names of your variables directly in your beacon.

These tools are great for getting your existing settings, but they don’t leave a lot of room for planning and documenting the full implementation aspects of your solution, so usually I use these as a source to copy my existing settings into my SDR.

What should an SDR contain?

On our git repo, you’ll see an Excel sheet that has a generic example Variable Map. Even if you have an SDR that you like already, take a look at this example- there may be some items you can get some use out of (plus this post will be much more interesting if you can see the columns I’m talking about).

Pretty much ALL Variable Maps have the following columns (and mine is no different):

- Variable/Report Name (eg, “Internal Search Terms”)

- Variable/Report Number (eg, “eVar1”)

- Description

- Example Value

- Variable Settings

But over the years I’ve found a few other columns can really make the variable map much more usable and useful (and again, this all may make more sense if you download our SDR to have an example):

A “Sorting Order” or Key

If, like me, you love using tables, sorting, and filtering in Excel, you may discover that Excel doesn’t know how to sort eVars, props and events: because the names mix letters and numbers, Excel sorts them as text and thinks that the proper order is “eVar1, eVar10, eVar2, eVar20”. So if you’ve sorted for some small task and want to get back to a sensible order, you pretty much have to do things manually. For this reason, I have a simple column that contains nothing but numbers indicating my ideal/proper/default order for my table.
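If you would rather generate that column than type it by hand, a natural sort is one way to do it. The snippet below is only a sketch: the variable names are made up, and the helper `sdr_sort_key` is a hypothetical name, not something from the template.

```python
import re

def sdr_sort_key(variable):
    """Split a name like 'eVar10' into ('evar', 10) so the number sorts numerically."""
    match = re.fullmatch(r"([A-Za-z]+)(\d*)", variable)
    if match is None:
        # Anything that is not letters-then-digits sorts by its lowercase text.
        return (variable.lower(), 0)
    prefix, number = match.groups()
    return (prefix.lower(), int(number) if number else 0)

# Hypothetical variable names, deliberately out of order.
variables = ["eVar10", "eVar2", "prop1", "eVar1", "event20", "event3", "eVar20"]
ordered = sorted(variables, key=sdr_sort_key)

# The "Sorting Order" column: 1, 2, 3, ... following the natural order.
sorting_order = {name: index for index, name in enumerate(ordered, start=1)}

print(ordered)
```

This groups names by prefix and then by number; if you prefer a different default order (props before events, say), the key would need to change accordingly.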

Param

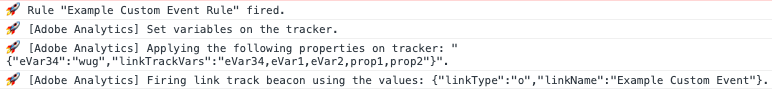

This is for those who live their lives in analytics beacons rather than reports, like a QA team. It’s nice to know that s.campaign is the variable, and it is named “Tracking Code” in the reports, but it’s not exactly obvious that if you’re looking in a beacon, the value for s.campaign shows in the “v0” parameter.
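For the numbered variables, the Param column follows a simple pattern (eVarN shows up as vN, propN as cN), so it can be filled in programmatically. Here is a rough sketch; `beacon_param` is a hypothetical helper, and the lookup table covers only a handful of predefined variables rather than the full list.

```python
import re

# A small, non-exhaustive lookup for predefined variables.
PREDEFINED_PARAMS = {
    "campaign": "v0",
    "pageName": "pageName",
    "channel": "ch",
    "server": "server",
    "events": "events",
    "products": "products",
}

# Prefixes for the numbered custom variables: eVar7 -> v7, prop12 -> c12.
NUMBERED_PREFIXES = {"eVar": "v", "prop": "c"}

def beacon_param(variable):
    """Return the beacon query parameter for a variable name, or None if unknown."""
    name = variable[2:] if variable.startswith("s.") else variable
    if name in PREDEFINED_PARAMS:
        return PREDEFINED_PARAMS[name]
    match = re.fullmatch(r"(eVar|prop)(\d+)", name)
    if match:
        return NUMBERED_PREFIXES[match.group(1)] + match.group(2)
    return None

print(beacon_param("s.campaign"))
print(beacon_param("eVar12"))
```

Returning `None` for anything unrecognized keeps the sketch honest about its limits: those rows are the ones to look up by hand.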

Variable Type

Again, I love me some Excel filtering, and I like being able to say “show me just my eVars” or “show the out of the box events”. It can also be handy for those not used to Adobe lingo (“what the heck is an eVar?”). So I have a column with something like the following possible values:

- eVar- custom conversion dimension

- event- custom metric (eg “event1”)

- event- predefined metric (eg “scAdd”, “purchase”)

- listVar- custom conversion dimension list (eg, “s.list1”)

- predefined conversion dimension (eg, “s.campaign”)

- predefined traffic dimension (eg, “s.server”)

- products string

- prop- custom traffic dimension

For things like this, where I have specific values I’ll be using repeatedly, I’ll keep an extra tab in the workbook titled “worksheet config”. Then I can use Excel’s “data validation” to pull a drop-down list from that tab.
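If your SDR ever leaves Excel (a CSV export, for example), the same idea can be checked in code: keep the allowed values in one place and flag any row that strays from them. This is a minimal sketch with made-up rows; the column names and the helper `rows_with_invalid_value` are assumptions for illustration.

```python
# The allowed values, kept in one place like the "worksheet config" tab.
VARIABLE_TYPES = [
    "eVar- custom conversion dimension",
    "event- custom metric",
    "event- predefined metric",
    "listVar- custom conversion dimension list",
    "predefined conversion dimension",
    "predefined traffic dimension",
    "products string",
    "prop- custom traffic dimension",
]

def rows_with_invalid_value(rows, column, allowed):
    """Return the rows whose value in `column` is not one of the allowed values."""
    return [row for row in rows if row.get(column) not in allowed]

# Hypothetical SDR rows; the second one uses a value that is not in the list.
rows = [
    {"Variable": "eVar1", "Variable Type": "eVar- custom conversion dimension"},
    {"Variable": "prop1", "Variable Type": "traffic prop"},
]

bad_rows = rows_with_invalid_value(rows, "Variable Type", VARIABLE_TYPES)
print([row["Variable"] for row in bad_rows])
```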

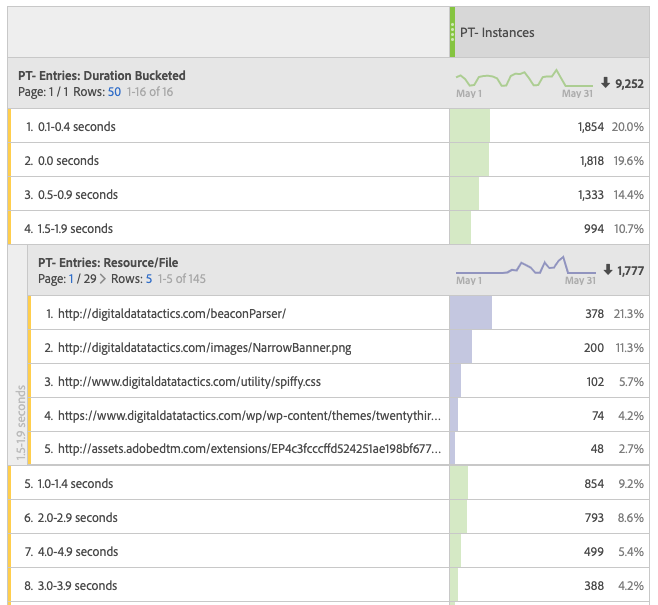

Category

This is my personal favorite- I use it every day. It’s a way to sort/group variables and metrics that are related to each other- eg, if you are using eVar1, eVar2, prop1, event1, and event2 all in some way to track internal search, it’s nice to be able to filter by this column and see all of them together.

The categories themselves are completely arbitrary and don’t need to map to anything outside of the document (though you might choose to use them in your Tech Spec or even in workspaces). Here’s a standard list I might use:

- Content Identification

- Internal Search

- Products

- Checkout

- Visitor Segmentation

- Traffic Sources

- Authentication

- Validation/Troubleshooting

Again, I could create/maintain this list in a single place on my “worksheet config” tab, then use “Data Validation” to turn it into a drop-down list in my Variable Map.

Status

This basically answers the question “is this variable currently expected to be working in production?” I usually have three possible values:

- Implemented

- Broken (or some euphemism for broken, like “needs work”)

- In progress

Data Quality Notes

This is for, well, notes on Data Quality. Such as “didn’t track for month of March” or “has odd values coming from PDP”.

Last Validated

This is to keep track of how recently someone checked on the health of this variable/metric. The hope is this will help prevent variables sitting around, unused, with bad data, for months or even years. I even add conditional formatting so if it has been more than 90 days, it turns red.
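The same 90-day rule is easy to apply outside of Excel too, for instance if you want a periodic list of what needs re-checking. A small sketch, assuming each row carries a "last validated" date (or nothing, if it has never been checked); the rows and the fixed "today" are invented for the example.

```python
from datetime import date, timedelta

STALE_AFTER = timedelta(days=90)

def is_stale(last_validated, today):
    """True if the row was never validated, or was validated more than 90 days ago."""
    if last_validated is None:
        return True
    return (today - last_validated) > STALE_AFTER

# Hypothetical rows; in practice these would come from the SDR sheet.
rows = [
    {"variable": "eVar1", "last_validated": date(2024, 5, 1)},
    {"variable": "prop1", "last_validated": date(2024, 1, 15)},
    {"variable": "event1", "last_validated": None},
]

# A fixed date keeps the example repeatable; use date.today() in practice.
today = date(2024, 6, 1)
needs_review = [row["variable"] for row in rows if is_stale(row["last_validated"], today)]
print(needs_review)
```

Treating "never validated" as stale is a design choice here; you may prefer to report those rows separately.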

Scope

Where would I expect to see this variable/metric? Is it set globally? Or maybe it happens on all cart adds?

Description

I’m certainly not unique in having this column, and I’m probably not unique in how many SDRs I’ve seen where this column has not been kept up-to-date. I’d like to stress the importance of this column, though- you may think the purpose of a variable is obvious, but almost every company I’ve worked with has at least one item on their variable map where no current member of the analytics team has any idea what the original goal was.

Ideally, the contents of this column would align with the “Description” setting within the Admin Console for each variable, so that folks viewing the reports can understand how to use the data.

We ARE all setting and using those descriptions, right? RIGHT?

Logic/Formatting and Example Value

Your Variable Map needs to have a place to detail the type of values you’d expect in this report. This:

- helps folks looking at the document to understand what each variable does (especially if you don’t have stellar descriptions)

- lets developers/implementers know what sort of data to send in

- provides a place to make sure values are consistent. For instance, if I have a variable for “add to cart location”, there’s no inherent reason why the value “product details page” would be more correct than “product view”… but I still only want to see ONE of those values in my report. If folks can look in my variable map and see that “product details page” is the value already in use, they won’t go and invent a new value for the same thing.

I often find it a good exercise to run the Adobe Consulting Health Dashboard and grab the top few values from each variable to fill out this column.

Source Type

What method are we using to get the value for this variable? I usually use Excel Data Validation to create this list:

- query param

- data layer

- rule-based/trigger-based (like for most events, which are usually manually entered into a TMS rule based on certain conditions)

- analytics library (aka, plugins)

- duplicate of another variable

Dimension Source or Metric Trigger

This contains the details that complement the “source type” column: If it comes from query parameters, WHICH query parameter is it? If it comes from the data layer, what data layer object is it? If it’s a metric, what in the data layer determines when to fire it? For instance, a prodView event doesn’t map directly to a data layer object, but it does RELY on the data layer: we set it whenever the pageType data layer object is set to “product details page”.
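To make those two kinds of entries concrete, here is a rough sketch of what the column is describing. In a real implementation this logic lives in your TMS rules, not in Python; the query parameter `cid`, the example URL, and both function names are assumptions for illustration only.

```python
from urllib.parse import parse_qs, urlparse

def query_param_value(url, param):
    """Source type 'query param': return the first value of `param`, or None."""
    values = parse_qs(urlparse(url).query).get(param)
    return values[0] if values else None

def metric_triggers(data_layer):
    """Source type 'rule-based': decide which events to set from the data layer."""
    events = []
    # prodView has no data layer object of its own; it relies on pageType.
    if data_layer.get("pageType") == "product details page":
        events.append("prodView")
    return events

print(query_param_value("https://www.example.com/?cid=email_spring", "cid"))
print(metric_triggers({"pageType": "product details page"}))
```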

Variable Settings

This is something many SDRs have but can be a pain to keep consistent, because every variable type has different settings options:

- events
  - type
    - counter (default)
    - numeric
    - currency
  - polarity
    - Up is good (default)
    - Up is bad
  - visibility
    - visible everywhere (default)
    - builders
    - hidden everywhere
  - serialization
    - always record event (default)
    - record once per visit
    - use serialization ID
  - participation
    - disabled (default)
    - enabled
- eVars
  - Expiration
    - Visit (default)
    - Hit/Page View
    - Event (like purchase)
    - Custom
    - Never
  - Allocation (note: this is less relevant these days now that allocation can be decided in workspace)
    - Most Recent (last) (default)
    - Original Value (first)
    - Linear
  - Type
    - Text string (default)
    - Counter
  - Merchandising
    - Disabled (default)
    - Product Syntax
    - Conversion Syntax
  - Merchandising Binding Events
- Props
  - List Prop
    - Disabled (default)
    - Enabled
  - List Prop Delimiter
  - Pathing
    - Disabled (default)
    - Enabled
  - Participation
    - Disabled (default)
    - Enabled

As you can see, you’d have to account for a lot of possible settings and combinations- I’ve seen some SDRs with 30 columns dedicated just to variable settings. I tend to simplify and just have one column where I only call out any setting that differs from the default, such as “Expires after 2 weeks” or “merchandising: conversion syntax, binds on internal search event, product view, and cart add.”
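If you do pull the full settings from one of the export tools, collapsing them into that single column is mostly a matter of comparing against the defaults. A minimal sketch for eVars, assuming the settings arrive as simple key/value pairs; the key names and the helper `non_default_settings` are hypothetical, and the defaults are the ones listed above.

```python
# Defaults for eVars, taken from the list above.
EVAR_DEFAULTS = {
    "expiration": "Visit",
    "allocation": "Most Recent (last)",
    "type": "Text string",
    "merchandising": "Disabled",
}

def non_default_settings(settings, defaults):
    """Summarize only the settings that differ from the defaults, as one string."""
    parts = [
        f"{name}: {value}"
        for name, value in settings.items()
        if defaults.get(name) != value
    ]
    return "; ".join(parts)

# Hypothetical settings for one eVar.
evar5 = {
    "expiration": "Custom (2 weeks)",
    "allocation": "Most Recent (last)",
    "type": "Text string",
    "merchandising": "Disabled",
}

print(non_default_settings(evar5, EVAR_DEFAULTS))
```

An empty string means everything is at its default, which is exactly the case where the column can stay blank.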

Classifications

This should list any classifications set up on this variable. This is a good one to keep updated, though I find many folks don’t.

GDPR Considerations

Don’t forget about government privacy regulations! Use this to flag items that will need to be accounted for in your privacy policies and procedures. Sometimes merely having the column can serve as a good reminder that privacy is something we need to consider when designing our solution.

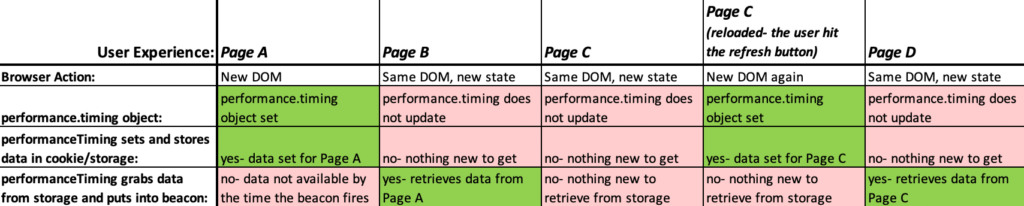

TMS Rule and TMS Element

I find these very helpful in designing a solution, but I’ll admit, they often fall by the wayside after an implementation is launched- and I don’t even see that as a bad thing. Once configured, your TMS implementation should speak for itself. (This will be even more true when Adobe releases enhanced search functionality in Launch.)

Other tabs

Aside from the variable map, I try to always have a tab/sheet for a Change Log. If nothing else, this can help with version control when someone had a local copy of the SDR that got out of sync with the “official” one. It also lets you know who to contact if you have questions about a certain change that was made. I also use this to flag which changes have been accounted for in the Report Suite settings (eg, I may have set aside eVar2 for “internal search terms”, but did I actually turn it on in the tool?)

If you have many Report Suites, it may be helpful to have a tab that lists them all- their friendly name, their report suite ID, any relevant URLs/Domains, and perhaps the business owner of that Report Suite.

Also, if you have multiple report suites, you may want to add columns to the variable map or have a whole separate tab that compares variables across suites (the Report Suite exporter and the ObservePoint SDR Builder both have this built in).
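If you end up building that comparison yourself, the core of it is small. The sketch below assumes you already have, for each report suite, a mapping of variable to report name; the report suite IDs and names are invented, and `compare_suites` is a hypothetical helper rather than anything the tools above expose.

```python
def compare_suites(suites):
    """Return variables whose report name differs (or is missing) across suites."""
    all_variables = set()
    for variables in suites.values():
        all_variables.update(variables)

    differences = {}
    for variable in sorted(all_variables):
        names = {
            suite_id: variables.get(variable, "(not set)")
            for suite_id, variables in suites.items()
        }
        # Keep only the variables where the suites disagree.
        if len(set(names.values())) > 1:
            differences[variable] = names
    return differences

# Hypothetical report suites and variable names.
suites = {
    "examplecom-prod": {"eVar1": "Internal Search Terms", "eVar2": "Search Result Count"},
    "examplecom-dev": {"eVar1": "Internal Search Terms", "eVar2": "Search Results"},
    "examplecom-app": {"eVar1": "Internal Search Terms"},
}

for variable, names in compare_suites(suites).items():
    print(variable, names)
```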

What works for you?

As I said, I don’t think my template is going to be the ultimate, universal SDR. I’d love to know what has worked for other people- did I miss anything? Is there anything I’m doing that I should do differently? Do you have a template you’d like to share? I’d love to hear from you!